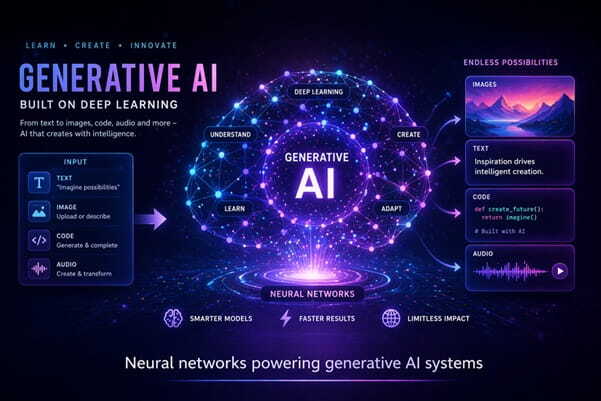

Featured Image Caption: Neural Networks Powering Generative AI Systems


How Generative AI Is Redefining Deep Learning Today

Something has shifted.

Deep learning isn’t just about prediction anymore. It’s now about creation, synthesis, and interpretation at a level that can feel surprisingly human. That shift is being driven by generative AI models, which don’t just analyze data but produce new outputs from it.

You’ve likely seen it already. AI writing text, creating images, generating code, even simulating voices. That’s not a side feature. It’s the main story now.

And it’s evolving fast.

What Generative AI Really Means in Deep Learning

Let’s simplify it first.

Traditional deep learning models learn patterns and classify or predict outcomes based on those patterns. They’re good at recognizing. They aren’t built to invent.

Generative AI flips that idea.

Instead of asking what’s in the data, it asks what could plausibly exist given the data. That subtle difference changes a lot: the model now has to learn the structure of the data (in statistical terms, its probability distribution) well enough to produce new variations that feel original.

Think about it like this.

A standard model might identify a cat in an image. A generative model can create a new cat image that never existed but still looks real enough to pass as one.
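To make the recognize-versus-create distinction concrete, here is a minimal sketch. It is a toy, not how real image models work: the numbers and the names `classify` and `generate` are hypothetical, and a single Gaussian per class stands in for a deep network. The point is that once a model has learned the distribution of the data, it can be used both to recognize (pick the closest class) and to create (draw new samples that never appeared in the dataset).

```python
import random
import statistics

# Hypothetical toy dataset: one measured feature (e.g. height in cm) per class.
data = {
    "cat": [23.0, 25.5, 24.1, 26.3, 22.8, 24.9],
    "dog": [55.2, 61.0, 58.4, 49.7, 63.1, 57.5],
}

# "Training": fit a Gaussian (mean, standard deviation) to each class.
params = {label: (statistics.mean(xs), statistics.stdev(xs)) for label, xs in data.items()}

def classify(x):
    """Recognition: return the class whose learned mean is closest to x."""
    return min(params, key=lambda label: abs(x - params[label][0]))

def generate(label, n, seed=0):
    """Creation: sample n new values from the learned distribution of a class."""
    rng = random.Random(seed)
    mu, sigma = params[label]
    return [rng.gauss(mu, sigma) for _ in range(n)]

print(classify(24.0))      # cat
print(generate("cat", 3))  # three new "cat" values that are not in the dataset
```

Real generative models replace the Gaussian with a neural network that can represent far more complex distributions, but the division of labor is the same.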

That’s powerful.

Core Architectures Behind the Shift

Not all generative models work the same way.

Some are designed to compete internally. Others focus on probability or sequence prediction. Each architecture brings a different flavor to how deep learning operates.

Generative Adversarial Networks

GANs introduced an interesting idea.

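Two neural networks compete with each other. One, the generator, creates synthetic data. The other, the discriminator, tries to tell real samples from generated ones. As training goes on, both improve, and when training goes well the generated output becomes almost indistinguishable from real data.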

It’s like a constant game of improvement.
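The competition can be written as two loss functions. The sketch below assumes the discriminator already outputs a probability that each sample is real; the function names are hypothetical, and the generator loss uses the common "non-saturating" form (maximize log D(G(z))) proposed in the original GAN paper instead of the raw minimax term. In a real system both losses would be minimized alternately with gradient descent on two neural networks.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: D should output 1 for real samples and 0 for generated ones.

    d_real, d_fake: discriminator probabilities that each sample is real.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: G is rewarded when D calls its samples real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# A discriminator that cannot tell real from fake outputs 0.5 everywhere.
print(round(discriminator_loss([0.5, 0.5], [0.5, 0.5]), 4))  # 1.3863 (= 2 ln 2)

# The generator's loss drops as the discriminator is fooled more often.
print(generator_loss([0.1, 0.2]) > generator_loss([0.9, 0.8]))  # True
```

That 2 ln 2 value is the discriminator loss at the theoretical equilibrium, where the generated data matches the real data so closely that guessing is the best the discriminator can do.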

Variational Autoencoders

VAEs take a different route.

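They compress each input into a smaller latent representation, described as a probability distribution rather than a single fixed point, and then reconstruct the input from it. Because the model learns how the data is distributed instead of just memorizing examples, sampling new points from that latent space produces variations that remain realistic while still being unique.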
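Two ingredients make this work, and both fit in a few lines. The sketch below is a minimal, framework-free illustration, assuming a Gaussian latent space and a squared-error reconstruction term (one common choice among several); the encoder and decoder networks are left out, and all function names are hypothetical. The reparameterization step isolates the randomness in `eps` so gradients can flow through `mu` and `log_var` during training, and the KL term pulls each encoded distribution toward a standard normal so the latent space stays smooth enough to sample from.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1) (the reparameterization trick)."""
    sigma = math.exp(0.5 * log_var)
    return mu + sigma * rng.gauss(0.0, 1.0)

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, sigma^2) || N(0, 1) ) for one latent dimension."""
    return 0.5 * (mu ** 2 + math.exp(log_var) - 1.0 - log_var)

def vae_loss(x, x_reconstructed, mu, log_var):
    """Reconstruction error plus KL regularizer, summed over dimensions."""
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_reconstructed))
    kl = sum(kl_to_standard_normal(m, lv) for m, lv in zip(mu, log_var))
    return reconstruction + kl

rng = random.Random(0)
z = reparameterize(mu=0.5, log_var=-1.0, rng=rng)  # one latent sample to feed a decoder
print(kl_to_standard_normal(0.0, 0.0))  # 0.0 (already a standard normal)
print(kl_to_standard_normal(1.0, 0.0))  # 0.5 (mean shifted away from 0)
```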

Transformer-Based Models

This is where things accelerated quickly.

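Transformer architectures changed how models process sequences, especially language. Their core mechanism, self-attention, lets every token weigh its relationship to every other token in the input at once, rather than reading one word at a time as earlier recurrent networks did. Word order is still tracked through positional information, but context drives the computation, and that is what allows these models to generate coherent paragraphs, long-form text, and structured responses that feel natural.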

That’s why text generation suddenly feels more fluid.
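The heart of self-attention is scaled dot-product attention: softmax(QKᵀ / √d)·V. The pure-Python sketch below shows only that formula; real Transformers add learned projection matrices for queries, keys, and values, multiple attention heads, positional encodings, and highly optimized tensor libraries. Each output row is a weighted average of the value vectors, with weights set by how well the query matches each key.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """For each query, average the values weighted by softmax(q . k / sqrt(d))."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)  # one weight per token; the weights sum to 1
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Self-attention over three toy token vectors: queries, keys, and values are the same tokens.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(scaled_dot_product_attention(tokens, tokens, tokens))
```

Every token attends to every other token in a single step, which is why context, not strict left-to-right order, shapes each output.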

Why Generative AI Feels Different Now

It’s not just better models.

It’s scale, training strategies, and access to diverse datasets. Together they have pushed deep learning into a new phase where models don’t just perform single tasks but can adapt across many tasks, often through instructions or a few examples in the prompt, without needing retraining every time.

You’ll notice this when interacting with modern systems.

They don’t feel narrow. They feel flexible.

And that flexibility comes from learning representations that generalize across domains rather than staying locked into a single use case.
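One visible form of this flexibility is few-shot prompting: the task is described in the input text, and the model's weights stay untouched. The helper below is a hypothetical sketch that only assembles such a prompt; no model is called, and the "Input:/Output:" layout is an assumed convention rather than any particular product's format.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a plain-text prompt; the task is defined by text, not by retraining."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)

# Two different tasks for the same (hypothetical) model, with no retraining in between.
translate = build_few_shot_prompt(
    "Translate French to English.",
    [("bonjour", "hello"), ("merci", "thank you")],
    "au revoir",
)
sentiment = build_few_shot_prompt(
    "Label the sentiment as positive or negative.",
    [("I loved it", "positive"), ("Total waste of time", "negative")],
    "Surprisingly good",
)
print(translate)
```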

Real-World Applications That Go Beyond the Obvious

This is where things get interesting.

Generative AI isn’t just for flashy demos. It’s quietly changing workflows across industries in ways that aren’t always visible at first glance.

Content Creation

Writers now use AI for ideation, drafting, and refinement.

But here’s the nuance. It doesn’t replace creativity. It accelerates the early stages so humans can focus on tone, narrative, and depth.

Software Development

Code generation tools can suggest functions, debug issues, and even explain logic in plain English, which reduces the friction developers face when switching between thinking and execution.

Healthcare Modeling

Generative models help simulate biological structures and predict molecular interactions, allowing researchers to explore possibilities before physical testing even begins.

That shortens discovery cycles.

Design and Prototyping

Designers can generate multiple variations of a concept instantly.

Instead of starting from scratch every time, they begin with a set of possibilities and refine from there, which changes how creative workflows are structured.

What Makes These Models So Effective

It comes down to representation.

Deep learning models today capture patterns at multiple levels. They don’t just learn surface features. They learn relationships, hierarchies, and context.

That layered understanding allows generative systems to produce outputs that feel coherent, even when they are entirely new.

And yes, there’s still unpredictability.

But that unpredictability is part of the appeal. Because outputs are sampled rather than fixed, it introduces variation that deterministic, rule-based systems simply can’t replicate.
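Much of that variation comes from how the next output is chosen. A common approach is temperature sampling: the model's scores (logits) are divided by a temperature before the softmax, so low temperatures favor the top choice and higher temperatures spread probability across alternatives. The sketch below assumes made-up logits over a three-word vocabulary; treating temperature 0 as greedy (always the top choice) is a convention chosen here, not a universal rule.

```python
import math
import random

def sample_with_temperature(logits, temperature, rng):
    """Return an index sampled from softmax(logits / temperature)."""
    if temperature <= 0:
        # Greedy decoding: always pick the highest-scoring option.
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [score / temperature for score in logits]
    m = max(scaled)
    weights = [math.exp(s - m) for s in scaled]  # random.choices normalizes these
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

vocab = ["cat", "dog", "fox"]
logits = [2.0, 1.0, 0.1]  # hypothetical model scores for the next word
rng = random.Random(42)

print([vocab[sample_with_temperature(logits, 0, rng)] for _ in range(5)])    # always "cat"
print([vocab[sample_with_temperature(logits, 1.5, rng)] for _ in range(5)])  # a mix of words
```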

Challenges That Still Need Attention

Not everything is solved.

Generative AI introduces new complexities that weren’t as prominent in earlier deep learning systems.

Output Reliability

Sometimes, generated outputs sound correct but contain inaccuracies.

That creates a need for verification layers, especially in sensitive domains.
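For generated code, one simple verification layer is to check the output mechanically before anyone uses it. The sketch below uses Python's built-in `ast` module to confirm that a generated snippet parses and defines the function that was requested; `verify_generated_code` is a hypothetical name. Passing this check says nothing about whether the logic is correct, so in practice it would sit alongside tests and human review.

```python
import ast

def verify_generated_code(source, required_function):
    """Return (ok, reason) after basic structural checks on generated Python code."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return False, f"syntax error: {exc.msg}"
    defined = {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }
    if required_function not in defined:
        return False, f"missing function {required_function!r}"
    return True, "ok"

print(verify_generated_code("def add(a, b):\n    return a + b\n", "add"))  # (True, 'ok')
print(verify_generated_code("def add(a, b)\n    return a + b\n", "add"))   # fails: missing colon
```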

Data Bias

Models learn from data. If the data carries bias, the outputs reflect it.

That makes dataset curation more critical than ever.
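A first, very partial curation check is to measure how well each group is represented before training. The sketch below counts the share of records per value of one attribute and flags groups below a chosen threshold; the records, the `region` attribute, and the 25% threshold are all hypothetical. Balanced counts don't rule out bias in labels or content, so this is a starting point, not a fairness guarantee.

```python
from collections import Counter

def representation_report(records, attribute, min_share):
    """Return each group's share of the dataset and the groups below min_share."""
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    shares = {group: count / total for group, count in counts.items()}
    flagged = sorted(group for group, share in shares.items() if share < min_share)
    return shares, flagged

# Hypothetical dataset: 8 samples from one region, 2 from another.
records = [{"region": "north"}] * 8 + [{"region": "south"}] * 2
shares, flagged = representation_report(records, "region", min_share=0.25)
print(shares)   # {'north': 0.8, 'south': 0.2}
print(flagged)  # ['south']
```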

Computational Demand

Training these models requires significant resources.

That limits accessibility: relatively few organizations can afford to train large models from scratch, so most teams build on existing pre-trained systems instead.
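Back-of-the-envelope arithmetic shows why. Storing each parameter in 16-bit precision takes 2 bytes, so the weights alone of a hypothetical 7-billion-parameter model need about 14 GB. Training needs much more, because gradients and optimizer state are stored too; the 16 bytes per parameter used below is a rough, commonly cited estimate for mixed-precision training with the Adam optimizer, treated here as an assumption, and it still excludes activation memory.

```python
def memory_gb(num_parameters, bytes_per_parameter):
    """Approximate memory in gigabytes (10**9 bytes) for per-parameter storage."""
    return num_parameters * bytes_per_parameter / 1e9

params = 7e9  # hypothetical model size: 7 billion parameters

weights_fp16 = memory_gb(params, 2)        # 16-bit weights: 2 bytes each
training_estimate = memory_gb(params, 16)  # assumed rule of thumb: weights + gradients + Adam state

print(weights_fp16)       # 14.0
print(training_estimate)  # 112.0
```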

How to Start Learning Generative AI Practically

You don’t need to begin with complex math.

Start with concepts, then move into hands-on experimentation. That approach sticks better.

Here’s a simple path.

- Understand neural networks and how layers work

- Learn how training data influences outcomes

- Explore pre-trained models and experiment with prompts

- Try modifying outputs and observe patterns

- Dive into architecture only after building intuition
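For the first step on that path, it helps to see that a neural network layer is just a weighted sum plus a bias, passed through a nonlinearity. The sketch below runs a tiny two-layer network forward in plain Python; the weights are hand-picked for illustration, whereas a trained network would learn them from data, which is exactly where training data shapes the outcome.

```python
def relu(value):
    """Rectified linear unit: pass positives through, clamp negatives to zero."""
    return max(0.0, value)

def identity(value):
    return value

def dense(inputs, weights, biases, activation):
    """One fully connected layer: each output neuron computes activation(w . x + b)."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

# Illustrative, hand-picked weights for a 2 -> 2 -> 1 network.
x = [1.0, 2.0]
hidden = dense(x, weights=[[0.5, -1.0], [1.0, 1.0]], biases=[0.0, -1.0], activation=relu)
output = dense(hidden, weights=[[1.0, 0.5]], biases=[0.1], activation=identity)
print(hidden)  # [0.0, 2.0]
print(output)
```

Changing a single weight and re-running is a quick way to build the kind of intuition the list above recommends before diving into full architectures.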

Curiosity drives progress here.

And you’ll learn faster by doing than by reading endlessly.

Future Direction: Where This Is Headed

The next phase isn’t just bigger models.

It’s smarter integration into everyday systems where AI doesn’t feel like a separate tool but becomes part of the workflow itself.

Imagine systems that understand context across tasks.

You start writing, switch to coding, move into analysis, and the system adapts without resetting. That’s where deep learning is moving, toward unified intelligence rather than isolated capabilities.

And that shift will redefine how we interact with technology daily.

Frequently Asked Questions (FAQ)

What is generative AI in deep learning in simple terms?

Generative AI refers to models that create new content such as text, images, or code by learning patterns from existing data, rather than only analyzing or predicting outcomes.

How is generative AI different from traditional machine learning?

Traditional models focus on prediction and classification, while generative models produce new data that resembles the training data without simply copying it.

Do I need coding skills to learn generative AI?

You can start without coding by exploring concepts and using interactive tools, but basic programming knowledge helps when you want to build or customize models.

How accurate are generative AI models?

They can produce highly realistic outputs, but they still require validation, especially in areas where precision matters, since generated content may include subtle errors.

Can generative AI replace human creativity?

It supports creativity rather than replacing it by handling repetitive or early-stage tasks, allowing humans to focus on refinement and originality.

What industries benefit the most from generative AI?

Fields like content creation, software development, healthcare research, and design are seeing strong impact due to faster ideation and improved efficiency.

How do generative models learn patterns?

They analyze large datasets to identify relationships and structures within the data, then use that understanding to generate new outputs that follow similar patterns.

Is generative AI difficult to understand for beginners?

It can seem complex at first, but starting with basic concepts and experimenting with tools makes it much easier to grasp over time.
